A polished end-to-end computer vision system for classifying car damage across 12 real-world categories.

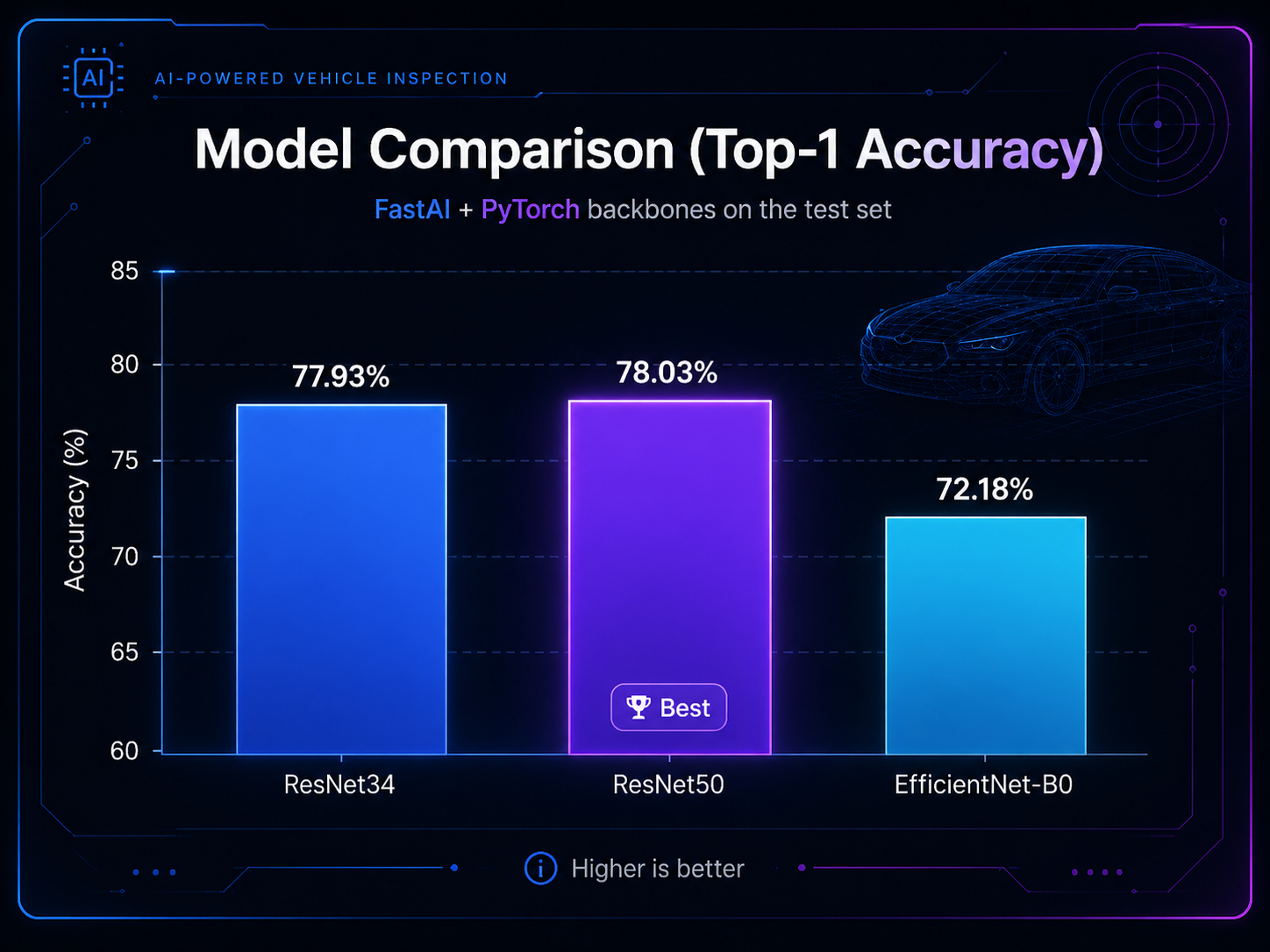

The project trains and evaluates transfer-learning models with FastAI + PyTorch, selects ResNet50 as the best backbone at 78.03% top-1 accuracy, and deploys the model through HuggingFace Spaces with a GitHub Pages web demo.

Problem context

Vehicle damage inspection is a high-volume visual workflow where consistency, speed, and traceability matter. This project frames the model as an applied inspection assistant rather than a generic image classifier.

First-pass classification can route obvious cases quickly while flagging ambiguous images for manual review.

Standardized category predictions help document vehicle condition across repeated inspection workflows.

Structured predictions make repair intake faster by turning uploaded photos into searchable damage labels.
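The triage idea above can be sketched as a confidence cutoff on the classifier's output. This is a minimal illustration, not the project's routing logic: `route_prediction`, `REVIEW_THRESHOLD`, and the 0.80 value are hypothetical, and the probabilities are made-up inputs.

```python
from typing import Dict, Tuple

# Hypothetical cutoff; tune it on held-out data before relying on it.
REVIEW_THRESHOLD = 0.80


def route_prediction(
    probs: Dict[str, float], threshold: float = REVIEW_THRESHOLD
) -> Tuple[str, str, float]:
    """Return (route, label, confidence) for one image's class probabilities."""
    if not probs:
        raise ValueError("probs must contain at least one class")
    # Pick the class with the highest predicted probability.
    label, confidence = max(probs.items(), key=lambda item: item[1])
    # Confident predictions are routed automatically; the rest go to a person.
    route = "auto" if confidence >= threshold else "manual_review"
    return route, label, confidence


# Illustrative inputs only, not model output.
print(route_prediction({"Flat Tire": 0.96, "Car Scratch": 0.04}))
print(route_prediction({"Car Scratch": 0.55, "Broken Bumper": 0.45}))
```

A single global threshold is the simplest choice; per-class thresholds may suit classes that the evaluation shows to be harder.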

Dataset categories

The dataset covers cosmetic, structural, environmental, tire, glass, and no-damage cases across 4,500+ labeled images.

Evaluation

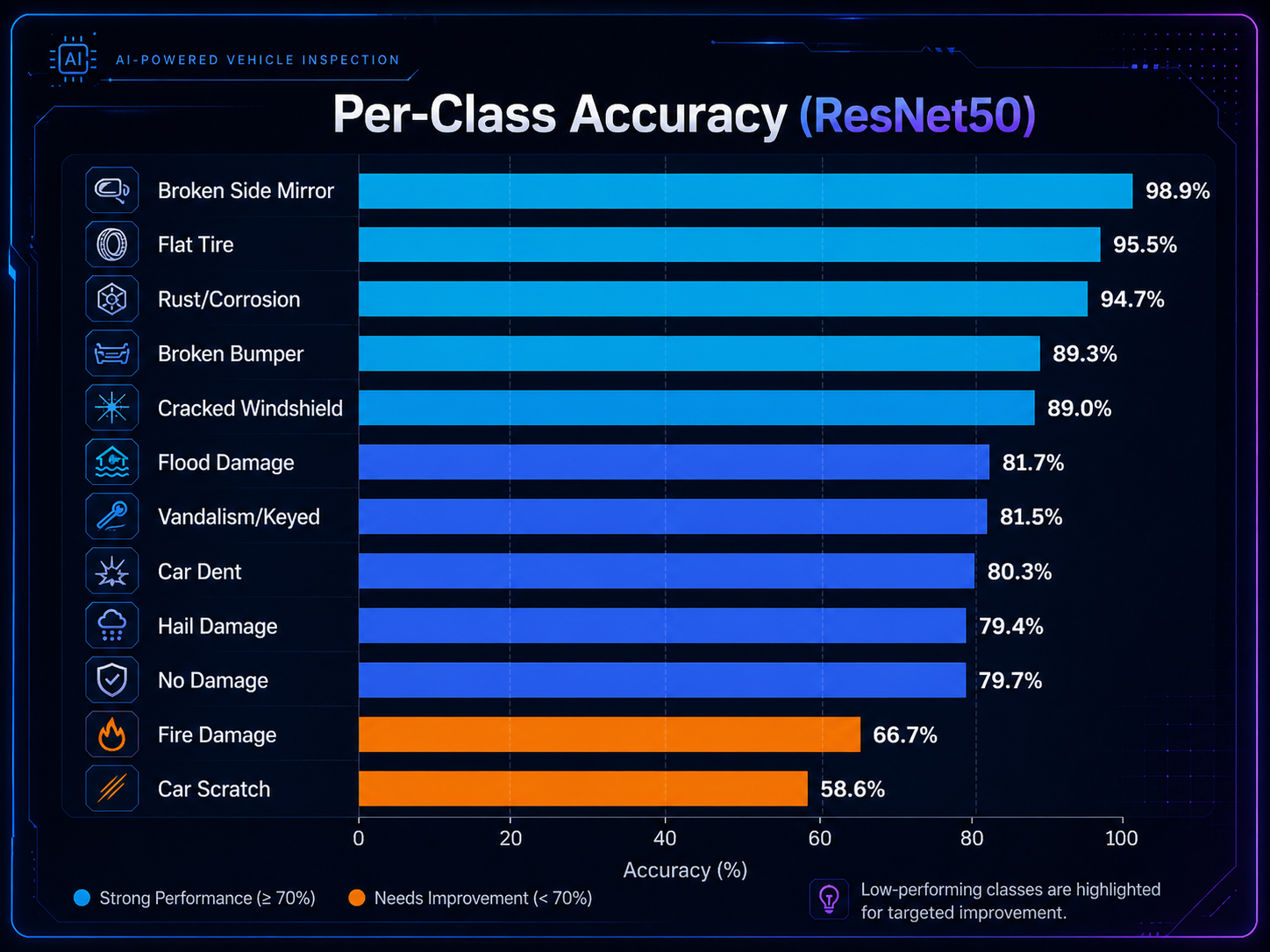

ResNet50 is the strongest model, but the detailed class-level view shows where the real inspection difficulty sits: subtle scratches, ambiguous dents, and context-dependent damage.

ResNet50 narrowly outperforms ResNet34. The small gap suggests the task is constrained by visual ambiguity and label overlap, not only backbone capacity.

Visually distinctive classes are strongest. Thin or ambiguous surface-level defects remain the hardest classes.

Strongest classes: Broken Side Mirror (98.9%), Flat Tire (95.5%), Rust/Corrosion (94.7%), Broken Bumper (89.3%), Cracked Windshield (89.0%).

Most challenging classes: Car Scratch (58.6%) and Fire Damage (66.7%). These categories vary heavily in scale, lighting, texture, and context.

This is the research value of the project: it does not stop at top-line accuracy but shows the failure modes that matter for deployment.

The confusion matrix exposes semantically meaningful errors between visually similar damage classes.
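As a rough sketch of how class-level figures and confused pairs can be read off a confusion matrix, the helpers below assume rows are true classes and columns are predictions, and that a per-class score means row-wise recall. The function names and the 3x3 matrix are illustrative, not the project's results.

```python
from typing import Dict, List, Sequence, Tuple


def per_class_recall(
    matrix: Sequence[Sequence[int]], labels: Sequence[str]
) -> Dict[str, float]:
    """Recall per class: diagonal count divided by the row (true-class) total."""
    recalls = {}
    for i, label in enumerate(labels):
        row_total = sum(matrix[i])
        recalls[label] = matrix[i][i] / row_total if row_total else 0.0
    return recalls


def top_confusions(
    matrix: Sequence[Sequence[int]], labels: Sequence[str], k: int = 3
) -> List[Tuple[str, str, int]]:
    """Largest off-diagonal cells as (true_label, predicted_label, count)."""
    pairs = [
        (labels[i], labels[j], matrix[i][j])
        for i in range(len(labels))
        for j in range(len(labels))
        if i != j and matrix[i][j] > 0
    ]
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)[:k]


# Toy matrix for illustration only (rows = true class, columns = predicted).
labels = ["Car Scratch", "Broken Bumper", "Flat Tire"]
matrix = [
    [6, 3, 1],
    [2, 8, 0],
    [0, 0, 10],
]
print(per_class_recall(matrix, labels))
print(top_confusions(matrix, labels, k=2))
```

With a trained FastAI learner, `ClassificationInterpretation.from_learner(learn).most_confused()` gives a comparable ranking directly.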

Methodology and pipeline

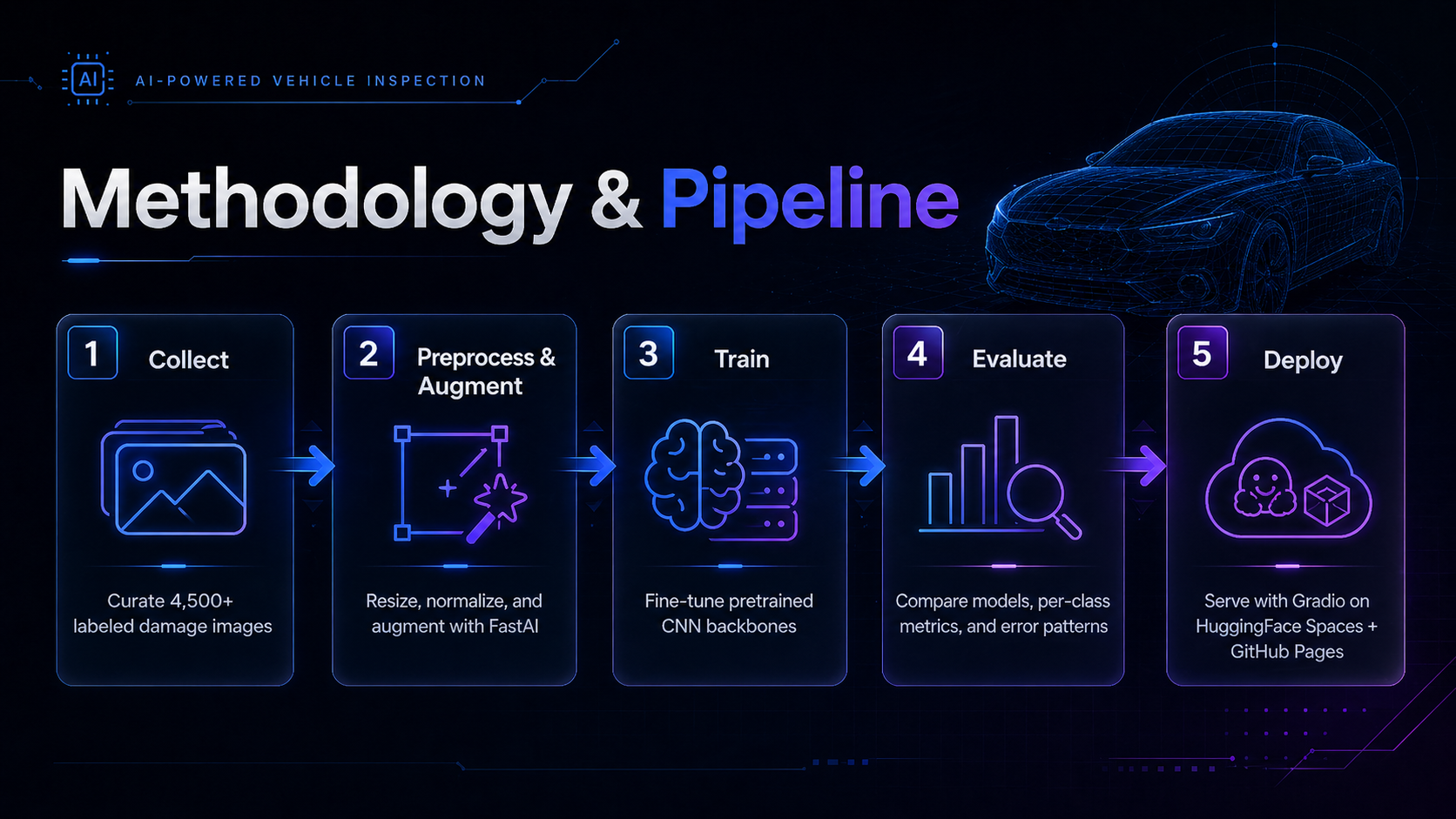

The workflow follows a practical applied ML pipeline: collect, clean, augment, train, evaluate, export, and deploy.

Build a labeled image dataset across 12 vehicle condition categories.

Split data, resize images, normalize inputs, and apply FastAI augmentations.

Fine-tune ResNet34, ResNet50, and EfficientNet-B0 with PyTorch-backed FastAI.

Compare model accuracy, inspect per-class behavior, and analyze confusion patterns.

Export the best model and serve inference through Gradio on HuggingFace Spaces.
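The preprocessing, fine-tuning, and export steps above might look roughly like the following FastAI sketch. It is not taken from the project's notebooks: the folder-per-class layout, the 20% validation split, the seed, image size, and epoch count are all assumptions, and `train_classifier` is a hypothetical helper name.

```python
from pathlib import Path


def train_classifier(
    data_dir: Path,
    export_name: str = "CarDamageClassifierV1.pkl",
    epochs: int = 5,        # assumed; not stated in the project description
    image_size: int = 224,  # assumed input resolution
):
    """Fine-tune a pretrained ResNet50 on a folder-per-class image dataset."""
    # Imported lazily so this helper can be defined without FastAI installed.
    from fastai.vision.all import (
        ImageDataLoaders, Normalize, Resize, accuracy,
        aug_transforms, imagenet_stats, resnet50, vision_learner,
    )

    # Split, resize, augment, and normalize in one data-loading step.
    dls = ImageDataLoaders.from_folder(
        data_dir,
        valid_pct=0.2,  # assumed hold-out fraction
        seed=42,
        item_tfms=Resize(image_size),
        batch_tfms=[*aug_transforms(), Normalize.from_stats(*imagenet_stats)],
    )

    # Transfer learning: pretrained backbone with a new classification head.
    learn = vision_learner(dls, resnet50, metrics=accuracy)
    learn.fine_tune(epochs)

    # Writes the pickle under learn.path, which defaults to data_dir here.
    learn.export(export_name)
    return learn
```

Swapping `resnet50` for `resnet34` in the same function is one way to run the backbone comparison under identical data settings.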

Repository

The repository separates notebooks, deployment code, model artifacts, generated documentation assets, and the GitHub Pages frontend.

    car-damage-classifier/
    |-- deployment/
    |   |-- app.py
    |   `-- requirements.txt
    |-- models/
    |   `-- CarDamageClassifierV1.pkl
    |-- notebooks/
    |   |-- data_preparation.ipynb
    |   `-- TrainingAndCleaning.ipynb
    |-- docs/
    |   |-- index.md
    |   |-- car_damage.html
    |   `-- assets/
    |       |-- charts/
    |       |-- confusion-matrices/
    |       |-- samples/
    |       `-- sections/
    |-- scripts/
    |   `-- generate_charts.py
    `-- README.md

Deployment

GitHub Pages presents the project and demo UI. HuggingFace Spaces hosts the Gradio inference backend and model runtime.

Interactive Gradio deployment for real-time image classification using the exported FastAI model.

Space: wrezachow/car-damage-classifier

Model: models/CarDamageClassifierV1.pkl
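A Gradio wrapper around an exported FastAI learner could be sketched as below. This is not the contents of `deployment/app.py`: `build_demo` is a hypothetical helper, and the top-3 label display and file-path image input are assumptions.

```python
def build_demo(model_path: str = "models/CarDamageClassifierV1.pkl"):
    """Wrap an exported FastAI learner in a Gradio image-classification UI."""
    # Lazy imports keep this helper definable without the deployment deps.
    import gradio as gr
    from fastai.vision.all import load_learner

    learn = load_learner(model_path)
    labels = list(learn.dls.vocab)

    def classify(image_path: str) -> dict:
        # learn.predict returns (decoded label, label index, probabilities).
        _, _, probs = learn.predict(image_path)
        return {label: float(p) for label, p in zip(labels, probs)}

    return gr.Interface(
        fn=classify,
        inputs=gr.Image(type="filepath"),
        outputs=gr.Label(num_top_classes=3),  # assumed display choice
    )
```

Calling `build_demo().launch()` would start the app locally. Returning a label-to-probability dictionary lets `gr.Label` render ranked confidences rather than a single class name.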

Research-style landing page plus a browser demo that calls the Space API through @gradio/client.

Landing: docs/index.md

Demo: docs/car_damage.html
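The browser demo uses the JavaScript `@gradio/client` package; the same kind of call can be sketched in Python with `gradio_client`. The endpoint name `/predict` is an assumption (it is the usual default for a single `gr.Interface`), and `classify_remote` is a hypothetical helper, so check the Space's API page for the actual endpoint.

```python
def classify_remote(
    image_path: str,
    space: str = "wrezachow/car-damage-classifier",
    api_name: str = "/predict",  # assumed endpoint name
):
    """Send one local image to the hosted Space and return its raw response."""
    # Lazy import so the helper can be defined without gradio_client installed.
    from gradio_client import Client, handle_file

    client = Client(space)
    # handle_file uploads the local file as the image input of the endpoint.
    return client.predict(handle_file(image_path), api_name=api_name)
```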

Quick start

Use the deployment requirements and exported FastAI model to launch the Gradio app on your machine.

    git clone https://github.com/wrezachow/car-damage-classifier.git
    cd car-damage-classifier
    python -m venv .venv
    # Windows PowerShell; on macOS or Linux run: source .venv/bin/activate
    .venv\Scripts\Activate.ps1
    pip install -r deployment/requirements.txt
    python deployment/app.py

Tech stack

The stack is intentionally pragmatic: mature transfer-learning tooling for training and simple hosted deployment for inference access.